Hot Wheels and a Lesson in Cold Starts

TL;DR: I built a classifieds app for Hot Wheels collectors. Validated the problem, ran pilots, launched — and it died quietly from lack of critical mass. This is about doing everything “right” and ...

Review of all the books I read in 2025.

And What We Actually Know About Knowledge

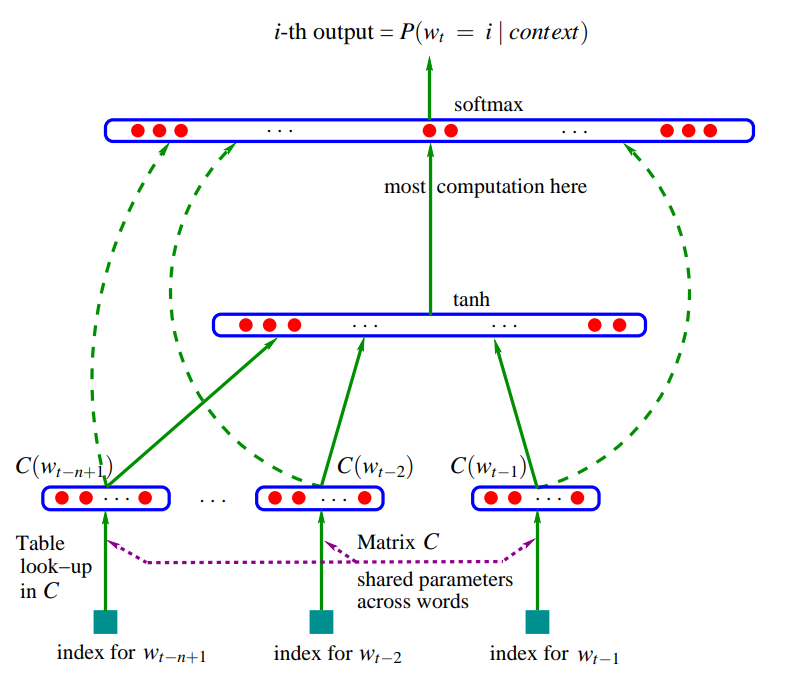

TL;DR: Yoshua Bengio’s 2003 paper “A Neural Probabilistic Language Model” is the genesis of modern NLP. Before this paper, language models were statistical counting machines. After it, they became ...

Review of all the books I read in 2024.

ICVGIP 2024

Video Moment Retrieval (VMR) is the task of finding the specific timestamp in a video that corresponds to a natural language query. You search “the part where they explain the ...

TL;DR: Standard attention is slow not because of the math, but because of memory traffic. The $N \times N$ attention matrix gets written to and read from GPU HBM multiple times, and that data movem...
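
To put a rough number on that $N \times N$ matrix, here is a small back-of-the-envelope sketch. The function name and the 2-bytes-per-element default (fp16) are assumptions for illustration, and it counts a single attention head only.

```python
def attention_matrix_bytes(seq_len, bytes_per_element=2):
    """Size of one N x N attention score matrix for a single head.

    bytes_per_element=2 assumes fp16 storage (an assumption, not from the post).
    """
    return seq_len * seq_len * bytes_per_element


for n in (1024, 4096, 8192):
    mib = attention_matrix_bytes(n) / (1024 ** 2)
    print(f"N={n}: {mib:.0f} MiB per head")
```

Doubling the sequence length quadruples the matrix, so every extra round trip through memory gets four times more expensive.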

TL;DR: During autoregressive generation, a Transformer recomputes the Key and Value vectors for every previous token at every single step. This is pure waste – those vectors never change. The KV ca...
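
A toy sketch of the waste described above, in plain Python. Everything here is hypothetical: `project` stands in for a real Key or Value projection, and the two decode functions only count how many projections each strategy performs.

```python
def project(x, w):
    """Stand-in for a Key or Value projection: scale each feature by w."""
    return [w * xi for xi in x]


def decode_without_cache(tokens):
    """Recompute K and V for every token seen so far at every step."""
    calls = 0
    keys, values = [], []
    for step in range(1, len(tokens) + 1):
        keys = [project(t, 2.0) for t in tokens[:step]]
        values = [project(t, 3.0) for t in tokens[:step]]
        calls += 2 * step
    return calls, keys, values


def decode_with_cache(tokens):
    """Compute K and V once per token and append them to a cache."""
    calls = 0
    keys, values = [], []
    for t in tokens:
        keys.append(project(t, 2.0))
        values.append(project(t, 3.0))
        calls += 2
    return calls, keys, values


tokens = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
print(decode_without_cache(tokens)[0])  # projections without a cache
print(decode_with_cache(tokens)[0])     # projections with a cache
```

Both functions end with identical keys and values. The uncached version performs a number of projections that grows quadratically with sequence length, while the cached version grows linearly.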

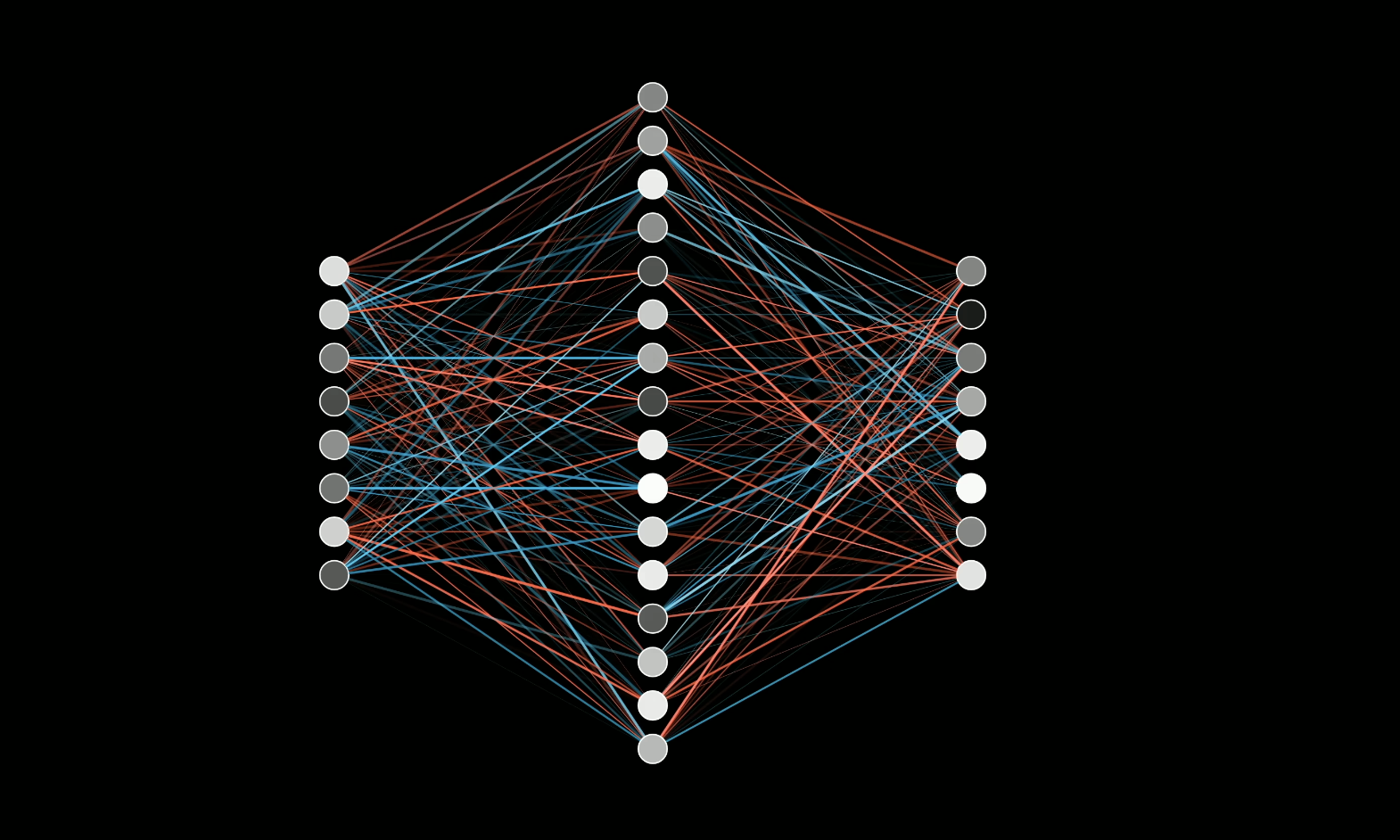

TL;DR: Attention lets tokens talk to each other. But where do the actual facts live – “Michael Jordan plays basketball,” “Paris is in France”? They live in the MLP blocks. Two matrix multiplication...

Talk Trois Log